Developing a framework for health care AI

The AMA has created a framework for the development and use of AI: Trustworthy Augmented Intelligence in Health Care. The framework builds on AMA policy for augmented intelligence and the latest research, viewed through the lenses of ethics, evidence and equity.

Our vision: AI and the quadruple aim

AI enhances patient care

Patients' rights are respected and they are empowered to make informed decisions about the use of AI in their care. Research demonstrates that AI use improves clinical outcomes, quality of life and satisfaction.

AI improves population health

Health care AI addresses high-priority clinical needs and improves the health of all patients, eliminating inequities rooted in historical and contemporary injustices and discrimination impacting Black, Indigenous, and other communities of color; women; LGBTQ+ communities; communities with disabilities; communities with low income; rural communities; and other communities marginalized by the health industry.

AI improves work life of health care providers

Physicians are engaged in developing and implementing health care AI tools that augment their ability to provide high-quality, clinically validated health care to patients and improve their well-being. Barriers to adoption, such as lack of education on AI, are overcome, and liability and payment issues are resolved.

AI reduces cost

Oversight and regulatory structures account for the risk of harm from, and the potential benefit of, health care AI systems. Payment and coverage are conditioned on compliance with appropriate laws and regulations, are based on appropriate levels of clinical validation and high-quality evidence, and advance affordability and access.

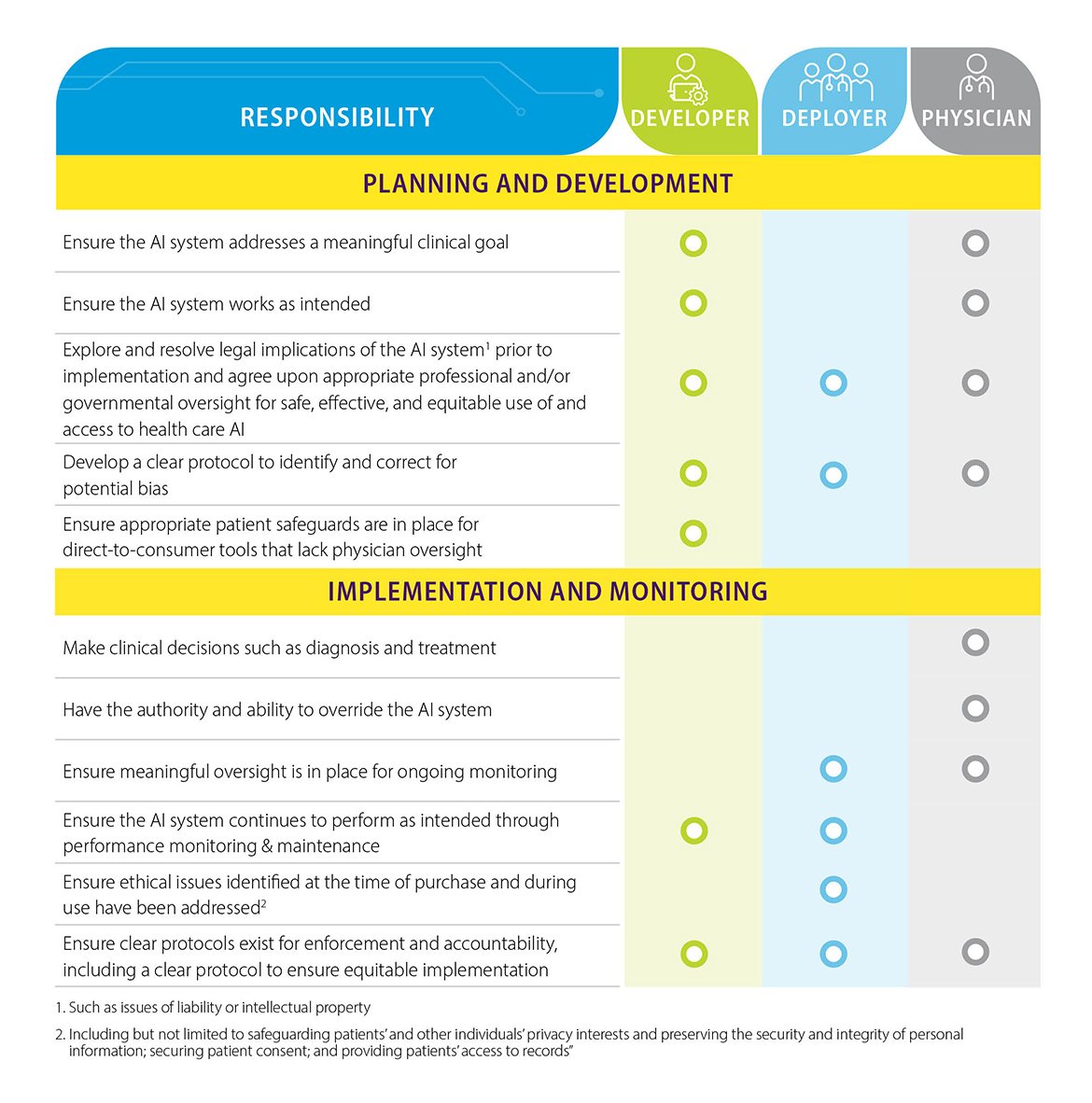

Clearly defined roles and responsibilities

Clear definition of roles and responsibilities for the following participants is central to putting the ethics-evidence-equity framework into practice:

- Developers of clinical AI systems

- Health care organizations and leaders who deploy AI systems in clinical settings

- Physicians who integrate AI into care for individual patients

Guidance for physicians

Practicing physicians can use the AI framework to evaluate whether a health care AI innovation meets the following conditions.

Does it work?

The AI system meets expectations for ethics, evidence, and equity. It can be trusted as safe and effective.

Does it work for my patients?

The AI system has been shown to improve care for a patient population like mine, and I have the resources and infrastructure to implement it in an ethical and equitable manner.

Does it improve health outcomes?

The AI system has been demonstrated to improve outcomes.

AI stakeholder insights

Before developing this framework, the AMA consulted key augmented intelligence (AI) stakeholder groups to elicit their perspectives on the intersection of AI and health care. The AMA hopes that individuals and organizations interested in the trustworthy development and implementation of AI in medicine will benefit from considering the insights shared.

The building of a robust evidence base and a commitment to ethics and equity must be understood as interrelated, mutually reinforcing pillars of trustworthy AI.

Need for transparency

Several stakeholder groups spoke to the need for transparency and clarity in the development and use of health care AI, specifically with respect to:

- The intent behind the development of an AI system.

- How physicians and AI systems should work together.

- How patient protections such as data privacy and security will be handled.

- How to resolve the tensions between data privacy and data access that limit the data sets available for training AI systems.

Establishing guardrails and education

Some interviewees emphasized the importance of establishing guardrails for validating AI systems and ensuring health inequities are not exacerbated in development and implementation. Education and training efforts are also needed to increase the number and diversity of physicians with AI knowledge and expertise.

Translating principles into practice

Together, these considerations suggest that responsible use of AI in medicine entails a commitment to designing and deploying AI systems that:

- Address clinically meaningful goals.

- Uphold the profession-defining values of medicine.

- Promote health equity.

- Support meaningful oversight and monitoring of system performance.

- Recognize clear expectations for accountability and establish mechanisms for holding stakeholders accountable.

AMA Code of Medical Ethics guidance

The Code of Medical Ethics provides additional, related perspectives on ethical issues in health care.

- Code of Medical Ethics Opinion 1.1.6: Quality

- Code of Medical Ethics Opinion 1.2.11: Ethically Sound Innovation in Medical Practice

- Code of Medical Ethics Opinion 11.2.1: Professionalism in Health Care Systems